Cold, spun-down disks

A power-saving NAS parks its HDDs after idle minutes. First-access latency jumps from milliseconds to seconds as each drive spins up. Tools that read files sequentially stall on every new directory.

A NAS accumulates duplicates faster than any other storage you own. Years of phone backups, photo libraries synced from three devices, a spouse’s media library, a second copy “just in case”, a torrent that overlapped with a streaming rip — it all stacks up on the same volume and grows invisibly for years.

This page covers how DuoBolt handles NAS scanning specifically: why most duplicate finders fail on network storage, how DuoBolt’s engine was built around the problem, and a step-by-step guide for scanning Synology, QNAP, TrueNAS, Unraid, and any SMB/NFS share.

Scanning a NAS is fundamentally different from scanning a local SSD. Three things conspire against most duplicate finders:

Cold, spun-down disks

A power-saving NAS parks its HDDs after idle minutes. First-access latency jumps from milliseconds to seconds as each drive spins up. Tools that read files sequentially stall on every new directory.

Network I/O latency

Every file open, stat, and read is a round-trip over Ethernet. A local scan doing 50,000 files/sec might drop to 500/sec over SMB. Legacy tools never see saturation — they’re always waiting on the wire.

Single-threaded hashing

Most duplicate finders still hash one file at a time with MD5 or SHA-256. On a modern 8-core machine scanning a NAS, seven cores sit idle while the eighth waits on a slow network read.

The result: older tools routinely time out on terabyte-scale NAS volumes. In our benchmark suite, both dupeGuru and Gemini 2 exceeded the 15-minute cutoff on a 1 TB Synology snapshot. Czkawka completed, but took 85 seconds cold where DuoBolt took 64.

DuoBolt was designed from day one with NAS and terabyte-scale storage in mind. Four architectural decisions compound to deliver the speed advantage:

Per-root parallelism

Multiple scan roots run in parallel instead of sequentially. If you point DuoBolt at /Volumes/NAS/photos and /Volumes/NAS/videos, both walk concurrently, so the bottleneck becomes disk and network throughput — not tool pacing.

Streaming chunked I/O

Reads are chunked and streamed, overlapping disk access with hashing. While BLAKE3 processes one chunk, the next is already on the wire. CPUs stay busy; the network is never the limiter waiting for a free core.

Multi-core BLAKE3 hashing

BLAKE3 is tree-structured and multi-core by design. On an M1 Pro scanning a NAS, DuoBolt uses all performance cores in parallel to process chunks, saturating whatever I/O the NAS can deliver.

Head+tail prehash

Before any full-content hash, DuoBolt hashes just the first and last N KiB of each candidate file. Non-matches are eliminated from the candidate pool before touching the rest of the file — a massive I/O saving on large media files that look similar by size but differ at the edges.

Cold scan — 64 seconds

1.01 TB volume, HDDs spun down before the test. Mix of 605 GB video, 246 GB photos, 162 GB music. DuoBolt discovers, prehashes, full-hashes, and groups everything in just over a minute.

Warm scan — 20 seconds

Same volume, caches warmed. DuoBolt’s cache layer short-circuits unchanged files, pushing the scan under 20 seconds on subsequent runs.

For side-by-side comparison with Czkawka, dupeGuru, and Gemini 2 on the same hardware, see the full benchmark results.

The difference between a 64-second cold scan and a 20-second warm scan is not magic — it is DuoBolt’s cache layer working as designed. When DuoBolt hashes a file, it records the full-content BLAKE3 hash keyed by path, size, and modification time. On subsequent scans, files whose (size, mtime) signature is unchanged skip hashing entirely and pull their hash from the cache.

For a NAS this matters more than for any other target. Every hash retrieved from cache is one file DuoBolt does not read over the network — the single biggest win on a medium dominated by SMB or NFS latency.

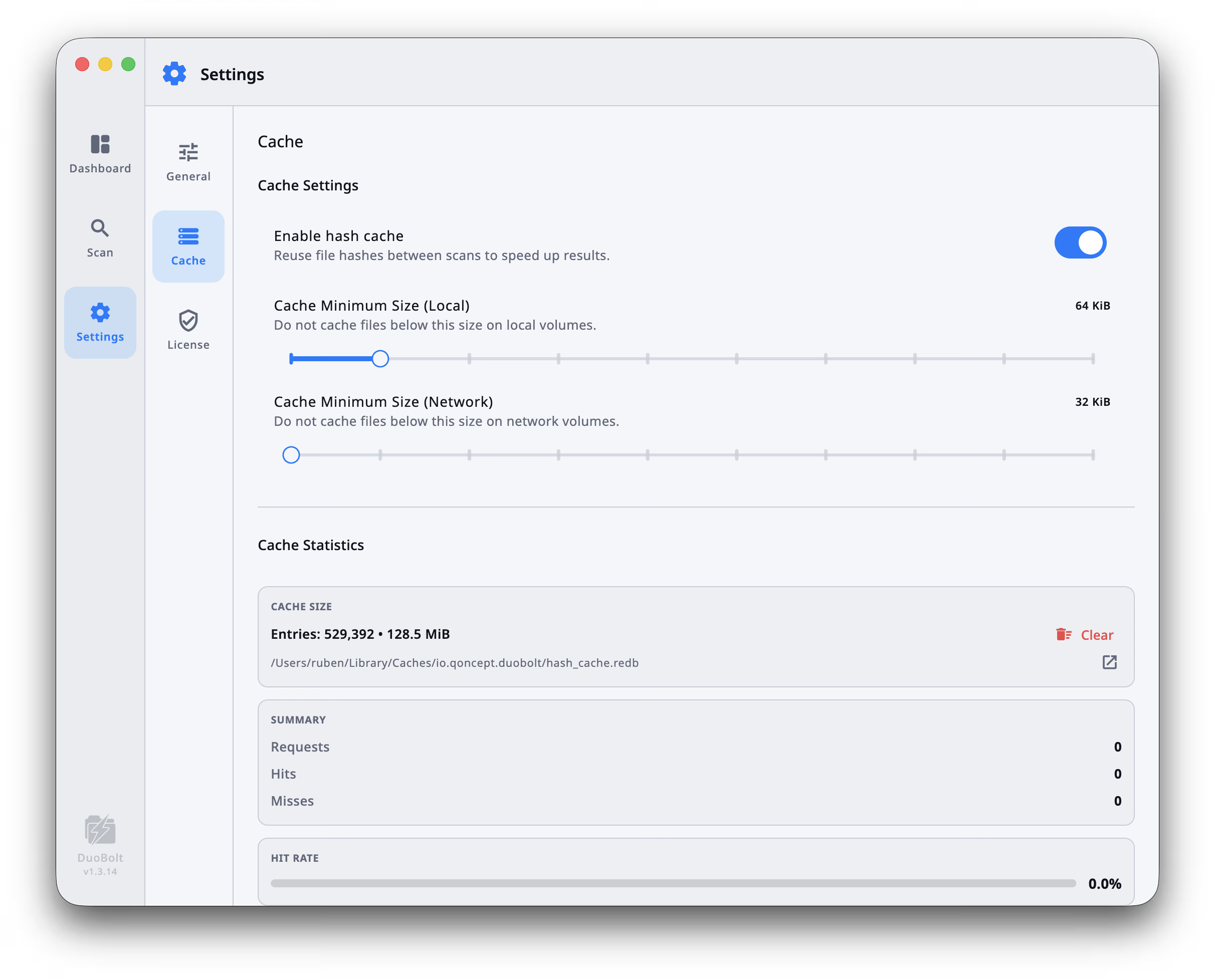

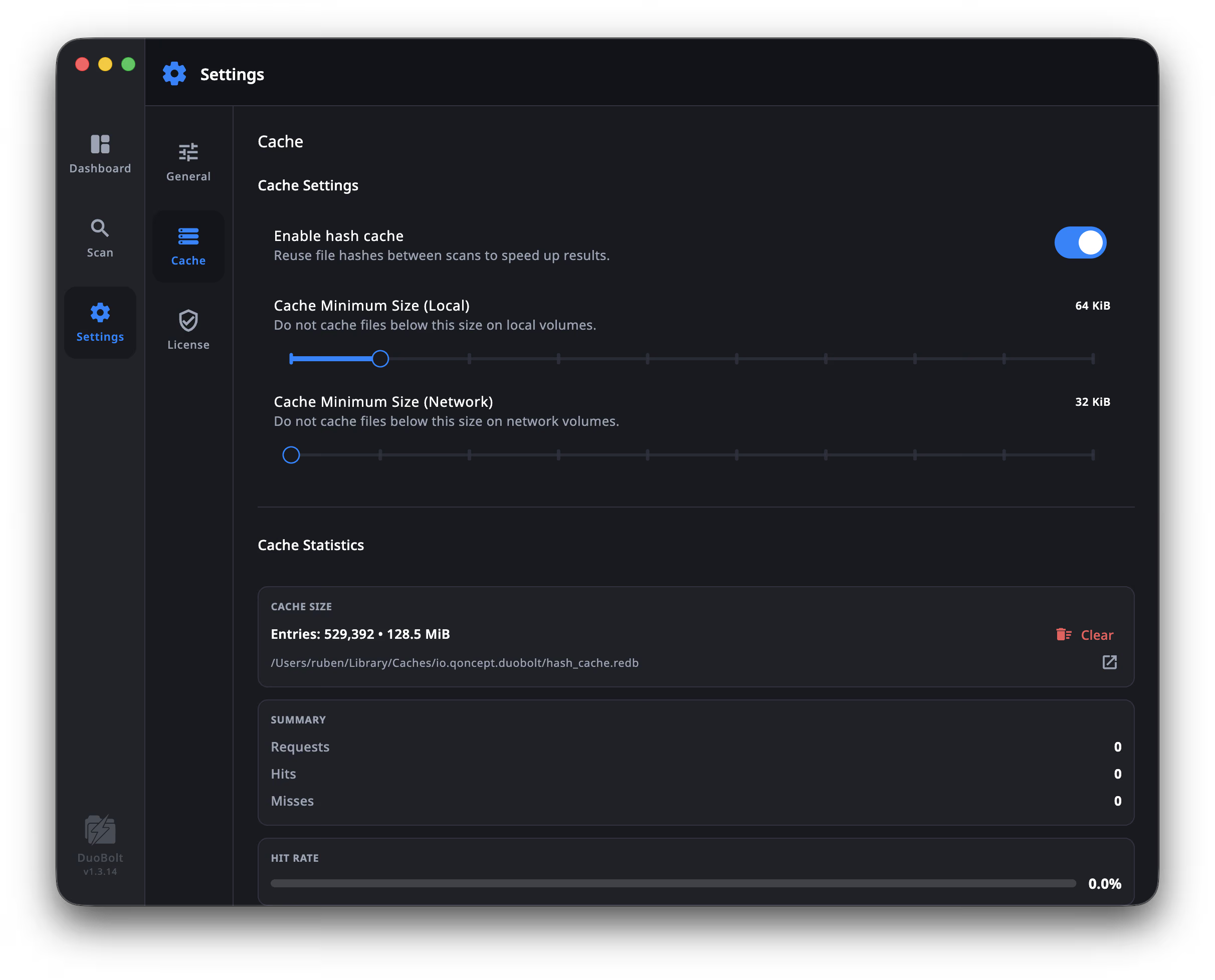

Configure the cache under Settings → Cache. Toggle the hash cache on or off, set separate minimum sizes for local and network volumes, and inspect live statistics (requests, hits, misses, hit rate) after each scan:

The Cache Minimum Size (Network) slider is the Desktop equivalent of --cache-min-size-network — lower it to cache smaller files on slow NAS shares, raise it to keep the cache lean. Cache Statistics lets you validate that warm scans are actually hitting the cache rather than re-hashing unchanged files.

The cache is tunable specifically for network volumes:

--cache-min-size-network=SIZE — only cache files larger than SIZE on network volumes, avoiding bloat from tens of thousands of tiny sidecar files.--cache-min-size-local=SIZE — separate threshold for local disks, typically set lower.--cache-max-bytes=SIZE / --cache-max-entries=N — hard ceilings when scanning multi-terabyte datasets.--cache-stats — inspect hit ratio after a scan to validate warm-up effectiveness.--cache-clear — full invalidation (rarely needed; DuoBolt auto-evicts stale entries when mtime changes).See the CLI reference for the complete flag list.

Mount the share on your machine

Cmd+K → smb://your-nas.local/volume (or afp://, nfs://)Open DuoBolt and click Add Folder

Navigate to the mounted share and select the directory you want to deduplicate (e.g., /Volumes/NAS/photos).

Configure filters (optional but recommended)

1 MiB or higher to skip thumbnails and metadata files.photoslibrary, .aplibrary, .tmbundle (macOS managed bundles)Run the scan

First scan is “cold” — expect the times shown in our benchmark. Subsequent runs benefit from DuoBolt’s cache layer.

Review before deleting

Mount the share first (see Desktop tab), then run:

duobolt-cli /Volumes/NAS/media \ --min-size=1M \ --ignore-system-files \ --ignore-hidden-files \ --output=json \ --quiet > nas-dupes.jsonThe CLI never deletes files — it produces a report only. Parse the JSON in your script and decide what to remove manually or via another tool. See the CLI reference for all options.

To automate weekly scans, wire the command into cron, launchd, or systemd:

0 3 * * 0 duobolt-cli /Volumes/NAS --output=json --quiet > ~/reports/nas-dupes-$(date +\%Y\%m\%d).jsonDuoBolt works with any share the host OS can mount — in the typical setup it runs on a desktop or server that has the NAS share mounted, not on the NAS itself. Tested and known-good:

For SMB shares, DuoBolt works transparently through the mount — there is no protocol-level optimization needed.

Most of the platforms above run a Linux-based OS and can execute the Linux CLI build directly over SSH, skipping the network mount. Windows-based appliances (rare) can use the Windows build instead. See the Download page for the full list of binaries.

To pick the right build, SSH into the NAS and run uname -m:

uname -m output | Build to download |

|---|---|

x86_64 | Linux (x64) |

aarch64 / arm64 | Linux (ARM64) |

armv7l / armv6l | Not supported |

Rough guidance if you don’t want to check first:

DuoBolt does not speak network protocols directly. It scans through whatever the host operating system mounts, so the protocol choice lives entirely on the mount side — but it materially affects scan throughput, especially on long cold scans.

When in doubt: SMB3. NFS only if you have a specific reason.

Not everything on a NAS that looks like a duplicate is one. Filesystems, backup tools, and media apps routinely store intentional hardlinks, snapshots, or managed metadata that inflate scan counts without representing real redundancy. Knowing what to skip shortens the scan and produces cleaner results.

Synology:

@eaDir/ — hidden thumbnail and metadata directory created inside every media folder. Useless to hash. Exclude with --exclude-dir-ext=eaDir or via Desktop ignore rules.#recycle/ — per-share Recycle Bin. Often holds intentional duplicates of “deleted” files; decide based on your cleanup goals.@appstore/, @database/, @tmp/ — system-managed, always skip.Filesystem snapshots (ZFS, BTRFS, Synology Btrfs):

.snapshots/, .zfs/snapshot/ — point-in-time copies of the entire volume. They look like massive duplication but represent historical state. Always exclude; otherwise every snapshot doubles the apparent duplicate count.Backup bundles:

.backupdb/ (Time Machine) — relies on OS-level hardlinks. DuoBolt’s symlink/hardlink collapse handles this correctly by default, but excluding the directory saves a lot of scan time..AppleDouble/ and ._* AppleDouble sidecars — generated by macOS on non-HFS filesystems. Covered by --ignore-system-files.Managed library bundles (macOS):

*.photoslibrary, *.aplibrary, *.itlp, *.imovielibrary — appear as single files in Finder but are massive directory trees internally. Exclude by directory extension: --exclude-dir-ext=photoslibrary,aplibrary,itlp,imovielibrary.Cross-platform noise:

Thumbs.db, desktop.ini, .DS_Store — always safe to skip. Covered by --ignore-system-files.Most of the above can be handled in one pass by toggling Ignore system files, Ignore hidden directories, and Exclude directory extensions in the Desktop app, or by combining --ignore-system-files with --exclude-dir-ext=... in the CLI.

--min-size. Thumbnails, sidecar metadata, and app config files are rarely worth hashing and inflate scan counts without reclaiming space..photoslibrary, .aplibrary, .itlp). These look massive but are not user-facing duplicates.